Like So Many Things

In programming, testing is the process of verifying that something produced works as intended. This seems like something that should be simple. However, every element of the definition “process of verifying that something produced works as intended” has options, and every one of those options has variations. Because of this, programmatic testing is a widely varied discipline.

To complicate things more, consider what testing validates. The difference between the intent of program specifications and the implementation of those specifications is a real issue. Testing to verify that a program meets its specifications can include personally reporting one’s experience live testing or running a programmatic suite of code tests. Either option has many different techniques and variations.

Like so many things, testing software is complex enough that it means something generally specific while also being specifically vague. Almost every project has different implementations and nearly every team has different practices.

What’s important is that we do it. Tested code is reliable code.

Reflexive Value

I appreciate the distinct process of programmatic testing: the act of writing code that tests program code. It started as a project directive, grew into a self-discipline, and eventually became a personal habit. I’ve nurtured this discipline because I find unique benefits from engaging with it. Particularly, this discipline has forced me to dissect, inspect, organize, optimize, and stress my own code – all of which validates and verifies functionality towards more confident production releases and support cycles for the projects that I work on.

Testing is something that gives back to the developer. The practice of specifically evaluating one’s code against its intentions and integrity makes not only the code better, but also the developer. This reflexive value is rare among the disciplines that I’ve encountered in my life.

From The Top

The strategic element to programmatic testing, the discipline of writing code that tests program code, is determining what scope of the program’s code is relevant to test. Programs are simultaneously integrated software and somewhat broken-down functionality (unless one has discovered the mythical god function).

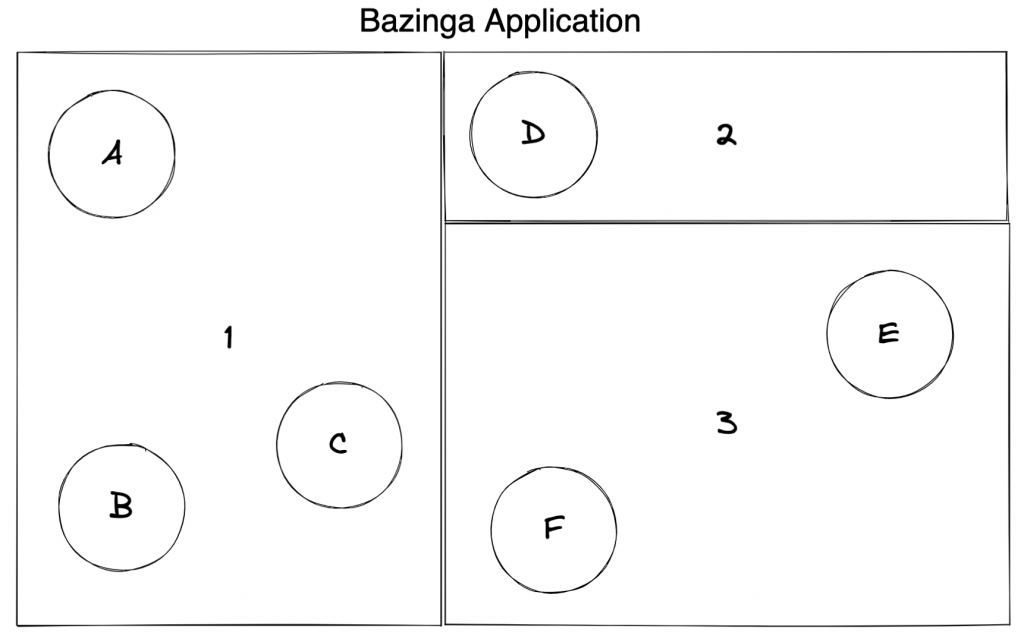

Here is an example of a generic program, broken down by categorized/componentized functionality:

This diagram presents a hypothetical Bazinga Application. It has three modules of the applications (the numbered rectangles), each with their own submodules (the lettered circles). Together, the modules with their submodules compose the overall application. I will be using this template for our discussion.

Bits & Parts

The widest testing scope for a program like this Bazinga Application would be to test the complete functionality from its outermost reach, the points at which producing/consuming systems access this program. This is essential and effective but speaks only towards the intent of the entirety – which has serious drawbacks. When testing the whole, it is easy to miss elements that relate to efficiency and/or side effects at the lower levels of the program. Breaking down the scope into smaller pieces can help identify those.

The narrowest testing scope is the targeted testing of the smallest, single-purpose functions in the application. These we will call Bits. The larger portions that depend on these functions while performing larger, multi-faceted operations we will call Parts.

To overly simplify our example using this terminology:

1. We’ll refer to the circular submodules as Bits

2. We’ll refer to the rectangular modules as Parts

3. Together, these comprise the entire program, which we’ll refer to as the Whole

Up The Ladder

In a well-categorized/componentized program (which we are assuming is the case for our Bazinga Application), functional dependency flows from the Whole down to the Parts down to the Bits. When programmatically testing, the thorough approach is to work back up that dependency ladder from the bottom: starting at the Bits, progressing to the Parts, ending at the Whole. This approach ensures that lower-level functionality is verified before we move upwards to functionality that will depend on it. This establishes a consistent chain of verification from the bottom to the top.

Using the Up the Ladder approach, one would programmatically test Bit A (highlighted in green), then Part 1 (highlighted in orange), and then the Whole (the entire application). This follows the Bit -> Part -> Whole pattern. Assuming the Bazinga Application has clearly layered complexity and functionality, Part 1 depends directly on Bit A and the Whole depends directly on Part 1. By starting at the bottom and testing Bit A first, one verifies Part 1’s dependencies before testing Part 1 itself. The same paradigm applies to the relationship between the Whole and Part 1.

Note: The above example is overly simplified. In a real scenario, one would test all the Bits in Part 1, then Part 1 itself, repeat that for every other Part (testing its Bits first), and only then test the Whole.
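The Bit -> Part -> Whole order can be sketched in a few lines of Python. This is only an illustration under assumptions: the functions `bit_a`, `part_1`, and `whole`, and what they do, are hypothetical stand-ins for the Bazinga Application's layers, not anything from a real program.

```python
# Hypothetical stand-ins for the Bazinga Application's layers.

def bit_a(text: str) -> str:
    """Bit A: a single-purpose function that normalizes one name."""
    return text.strip().title()


def part_1(names: list[str]) -> list[str]:
    """Part 1: a multi-step operation that depends on Bit A."""
    return sorted(bit_a(name) for name in names if name.strip())


def whole(raw: str) -> str:
    """The Whole: the outermost entry point, which depends on Part 1."""
    return ", ".join(part_1(raw.split(",")))


# Tests, written from the bottom of the ladder to the top.
def test_bit_a():
    assert bit_a("  ada ") == "Ada"


def test_part_1():
    assert part_1([" grace", "", "ada "]) == ["Ada", "Grace"]


def test_whole():
    assert whole("grace, ada") == "Ada, Grace"


LADDER = [test_bit_a, test_part_1, test_whole]


def run_ladder() -> list[str]:
    """Run each rung in order; an AssertionError stops the climb."""
    passed = []
    for test in LADDER:
        test()
        passed.append(test.__name__)
    return passed
```

If `test_bit_a` fails, the run stops before Part 1 or the Whole is exercised, which points the investigation at the lowest broken rung first.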

Unit Testing: One Is the Loneliest Number

We’ve established an overarching strategy with the Up the Ladder technique. Now let’s address tactics.

The lowest-level testing tactic is Unit Testing: the practice of isolating a single file’s code from the rest of the application and the outside world for the purpose of testing its functionality distinctly. Each individual file we isolate is called the SUT, or Software Under Test.

To isolate code, one must use Dependency Imitation: the practice of replacing each and every external dependency of the file with a mock, stub, or spy. Dependency Imitation is probably the most disliked part of programmatic testing because it involves the developer creating nonsensical code. Dependency Imitation involves creating fake code that replaces real code. The fake code mimics the behavior of the real code within the specifications required to support the SUT.

Dependency Imitation is rooted in the scope and capabilities of the dependency one is imitating. Bits have a narrow scope of functionality to imitate, narrow enough that the effort required to mock them can seem silly. Conversely, vast dependencies such as a database interface or multifaceted external API can be laborious to effectively imitate. These can be frustrating and difficult to produce quality imitations for.
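As a minimal sketch of Dependency Imitation, the example below uses `Mock` from Python's standard `unittest.mock` module. The SUT function `register_user` and its two dependencies (`user_store` and `mailer`) are hypothetical, invented here for illustration. The fake `user_store` acts as a stub by returning a canned answer, and both fakes record the calls made to them so the test can inspect the interaction afterwards.

```python
from unittest.mock import Mock


# Hypothetical SUT: its external dependencies are passed in as arguments,
# which makes them easy to replace with imitations.
def register_user(email, user_store, mailer):
    """Save a new user and send a welcome email; reject duplicates."""
    if user_store.exists(email):
        return False
    user_store.save(email)
    mailer.send(to=email, template="welcome")
    return True


def test_register_user_with_imitations():
    # Stub behavior: the fake store reports that the user does not exist.
    user_store = Mock()
    user_store.exists.return_value = False
    mailer = Mock()

    assert register_user("ada@example.com", user_store, mailer) is True

    # The fakes recorded how the SUT used them.
    user_store.save.assert_called_once_with("ada@example.com")
    mailer.send.assert_called_once_with(to="ada@example.com", template="welcome")


def test_register_user_rejects_duplicates():
    # Stub behavior: the fake store reports that the user already exists.
    user_store = Mock()
    user_store.exists.return_value = True
    mailer = Mock()

    assert register_user("ada@example.com", user_store, mailer) is False

    # Nothing should be saved or sent for a duplicate.
    user_store.save.assert_not_called()
    mailer.send.assert_not_called()
```

Neither test touches a real database or mail server; the imitations only need to behave correctly within the narrow range the SUT relies on.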

Once Dependency Imitation is complete, one can properly Unit Test. The Up the Ladder principle also applies to the SUT as there are functions depending on other functions in the same file. Starting with the simplest, non-dependent functions and working up to the most complex, multi-dependent functions ensures that the dependency chain within the file is verified thoroughly.

To be considered Unit Tested, all functions in a file must be tested against their intended functionality (successes) and reasonable unintended functionality (failures). The quality of the overall Unit Test suite depends on:

- The quality of the Dependency Imitation

  - Better mocks/stubs/spies make for more accurate test scenarios involving those dependencies.

- The extent of failure/success scenarios

  - Lowest level: simple successes that reflect feature intent.

  - Low level: simple failures that reveal how functionality fails.

  - High level: complex, multi-faceted successes and failures.

- The functional categorization/breakdown of the SUT

  - Code that is broken down into smaller, focused portions is easier to test.
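The three levels of success/failure scenarios might look like this for a single function. The function `parse_quantity` and the helper `raises_value_error` are hypothetical examples chosen for brevity, not part of any real program.

```python
def parse_quantity(raw: str) -> int:
    """Hypothetical SUT function: parse a positive whole-number quantity."""
    value = int(raw.strip())  # int() raises ValueError for non-integer text
    if value <= 0:
        raise ValueError("quantity must be positive")
    return value


def raises_value_error(raw: str) -> bool:
    """Small test helper: report whether parsing `raw` fails as expected."""
    try:
        parse_quantity(raw)
    except ValueError:
        return True
    return False


# Lowest level: a simple success that reflects feature intent.
def test_simple_success():
    assert parse_quantity("3") == 3


# Low level: a simple failure that reveals how the function fails.
def test_simple_failure():
    assert raises_value_error("three")


# High level: multi-faceted successes and failures around the edges.
def test_edge_cases():
    assert parse_quantity("  42\n") == 42  # surrounding whitespace is tolerated
    assert raises_value_error("0")         # zero is not positive
    assert raises_value_error("-7")        # negatives are rejected
    assert raises_value_error("")          # empty input
    assert raises_value_error("4.5")       # decimals are not whole numbers
```

Because `parse_quantity` is small and focused, each scenario needs only a line or two, which is the practical payoff of the last point in the list above.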

Integration Testing: Come Together

The type of programmatic testing that is a step up from Unit Testing is Integration Testing: the practice of testing integrated combinations of the program’s code while maintaining isolation from the outside world. This type of programmatic testing verifies different combinations of integrated code throughout the application, typically grouped by category of functionality or common purpose. Integration Testing is tempting to skip. It’s common to prefer going straight from Unit Testing to Environment Testing: the practice of testing a program within the context of an environment that includes outside world dependencies. Environment Testing is often referred to as Live Testing, with the target environment tacked on to the name, as in “Live Testing in (DEV, QA, or UAT)”.

Integration Testing is particularly useful, though, especially when a portion of the program with highly interdependent or categorically similar logic is larger than any single file. Each file has already been Unit Tested, so Integration Testing fills the gap between the individual file and the entire application.

An Integration Testing suite representing Part 1 and its internal Bits A-C would be similar to the Unit Testing suite for Part 1, with the key exception that the dependencies Part 1 has on Bits A-C are now integrated, not imitated. The tests we write in Part 1’s Integration Testing suite reflect that SUT’s integration with its Bits.
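The contrast between imitated and integrated dependencies can be sketched as follows. Everything here is an assumption made for illustration: `bit_a` is a hypothetical tax calculation and `part_1` is a hypothetical totaling routine that accepts its Bit as a parameter so a test can swap it out.

```python
from unittest.mock import Mock


def bit_a(price_cents: int) -> int:
    """Hypothetical Bit A: add 10% tax, rounded down to whole cents."""
    return price_cents + price_cents // 10


def part_1(prices, apply_tax=bit_a):
    """Hypothetical Part 1: total a list of prices, taxing each via Bit A."""
    return sum(apply_tax(price) for price in prices)


def test_part_1_unit():
    # Unit test: Bit A is imitated, so only Part 1's own logic is exercised.
    fake_bit_a = Mock(side_effect=lambda price: price)
    assert part_1([100, 250], apply_tax=fake_bit_a) == 350
    assert fake_bit_a.call_count == 2


def test_part_1_integration():
    # Integration test: the real Bit A is used, so the combined behavior
    # of Part 1 and Bit A is what gets verified.
    assert part_1([100, 250]) == 385
    # Per-item rounding only shows up when the real Bit is integrated:
    # each 15 becomes 16, giving 32 rather than 33 for a taxed total of 30.
    assert part_1([15, 15]) == 32
```

The unit test would pass even if Bit A's rounding were wrong; the integration test is where that kind of combined behavior becomes visible.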

This example only works when the categorical assumption we’ve established for our Bazinga Application is true for the program. For an Integration Testing suite to be effective, one must be confident that the tests are relevant to a distinct body of functionality that is distinctly represented by their integration together.

Real World Example: Automated Emails

Our company had a classic case regarding a program that published automated emails in different stages of a user’s interaction with it. There were welcome emails, onboarding emails, and other specific emails throughout a user’s lifecycle. The project manager had some concerns about the automated emails during early user experience testing. He asked what could be done to verify the automated email functionality specifically. Together, we came up with an Integration Testing plan that targeted just the portions of the program that pertained to automated emails. It looked something like this:

- Imitated all outside world dependencies, especially the email client

- Targeted the trigger points for each automated email that would be sent to a user

- Set up a read mechanism on our email client Dependency Imitation

The suite triggered the automated email points in the program, then read each generated email via the read mechanism. The validation portion of the tests analyzed the captured emails for template, message data, and to/from specifications in accordance with their context.
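A rough reconstruction of that shape, not the actual project code, might look like the sketch below. All names in it are hypothetical: `Email`, `FakeEmailClient` (the email client imitation with its `read_sent` read mechanism), and `UserLifecycle` (a stand-in for the part of the program containing the trigger points).

```python
from dataclasses import dataclass, field


@dataclass
class Email:
    to: str
    sender: str
    template: str
    data: dict


@dataclass
class FakeEmailClient:
    """Imitation of the email client: captures emails instead of sending."""
    outbox: list = field(default_factory=list)

    def send(self, email: Email) -> None:
        self.outbox.append(email)

    def read_sent(self, to: str) -> list:
        """The read mechanism: return captured emails for one recipient."""
        return [email for email in self.outbox if email.to == to]


class UserLifecycle:
    """Hypothetical slice of the program that holds email trigger points."""
    SENDER = "hello@bazinga.example"

    def __init__(self, email_client):
        self.email_client = email_client

    def sign_up(self, address: str, name: str) -> None:
        self.email_client.send(
            Email(address, self.SENDER, "welcome", {"name": name}))

    def start_onboarding(self, address: str, step: int) -> None:
        self.email_client.send(
            Email(address, self.SENDER, "onboarding", {"step": step}))


def test_lifecycle_emails():
    client = FakeEmailClient()
    app = UserLifecycle(client)

    # Trigger the automated email points.
    app.sign_up("ada@example.com", "Ada")
    app.start_onboarding("ada@example.com", step=1)

    # Read the captured emails and validate template, data, and to/from.
    welcome, onboarding = client.read_sent("ada@example.com")
    assert welcome.template == "welcome"
    assert welcome.data == {"name": "Ada"}
    assert (welcome.to, welcome.sender) == ("ada@example.com", UserLifecycle.SENDER)
    assert onboarding.template == "onboarding"
    assert onboarding.data == {"step": 1}
    assert client.read_sent("nobody@example.com") == []
```

The real suite covered more trigger points and richer templates, but the pattern is the same: trigger, capture, read, validate.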

It took a decent amount of work and a generous amount of time, but was overall valuable to the project manager, who gained significantly improved confidence around this specific functionality in the program.

Stick to What Matters

The above real-world example speaks well to the heart of this post. A lot of issues surrounding program confidence can be improved with the appropriate amount of testing. Let me emphasize this idea of “appropriate”. Within the overall testing plan, there are obvious extreme approaches to programmatic testing.

One extreme is the Overly Thorough Approach, an absurdly high position. It proposes that absolutely every aspect of the program should be programmatically tested thoroughly using every testing tactic available. This would include:

- An absolutely thorough Unit Testing suite

- Every conceivable combination of Integration Testing

- A complete Environment Testing suite (which was not covered in this post).

At some point, the return of the Overly Thorough Approach diminishes dramatically. In every program, some functionality is simple enough that one style of testing or the other provides a reasonable level of confidence. Also, certain combinations of integrations don’t speak uniquely to the scope of practical functionality from their Unit Testing versions. The Overly Thorough Approach needs to be tempered practically.

The other extreme is the Overly Minimal Approach where a singular tactic is used with minimal Dependency Imitation and the simplest success/failure paradigms. This style of testing accomplishes the technicality of programmatically testing with as few of the benefits as possible. I would consider this as close to not testing as possible. The Overly Minimal Approach to programmatic testing needs to be improved practically.

Gaining Confidence

Untested programs generate more anxiety than confidence. This is something that not even the most specific languages and well-developed libraries/frameworks can overcome. Nothing truly replaces the practice of programmatically testing. The key to the success of our projects is testing what matters. This lies between the extremes of the Overly Thorough Approach and the Overly Minimal Approach. The goal is that programmers use the discipline of programmatic testing to build confidence in programs where it matters.